The world today runs on digital systems. From banks and hospitals to universities, transportation systems, businesses, and governments, almost every activity depends on computers and networks. While technology has improved communication, productivity, and innovation, it has also created opportunities for cybercrime. Hacking, once considered an activity performed only by highly skilled programmers sitting alone in dark rooms, has now become a global issue affecting millions of people and organizations. In recent years, the growth of Artificial Intelligence (AI) has transformed the field of hacking in both positive and negative ways. AI has become a powerful tool that can strengthen cybersecurity, but at the same time, it can also be used by hackers to perform more intelligent, faster, and more dangerous cyber attacks.

Hacking refers to the process of gaining unauthorized access to computer systems, networks, or digital devices. Traditionally, hacking required extensive technical knowledge, programming skills, and a deep understanding of operating systems and network protocols. Early hackers were often curious programmers who explored systems to understand how they worked. Over time, hacking evolved into a more organized activity involving financial fraud, identity theft, cyber espionage, ransomware attacks, and even cyber warfare. Today, hacking is no longer limited to individuals with advanced technical expertise because modern tools and AI-powered systems have simplified many attack methods.

Artificial Intelligence is a branch of computer science that enables machines to simulate human intelligence. AI systems can learn from data, identify patterns, make decisions, and improve their performance over time. Technologies such as Machine Learning, Deep Learning, Natural Language Processing, and Neural Networks are major components of AI. These technologies are widely used in industries such as healthcare, education, banking, transportation, and entertainment. However, cybercriminals have also started using AI to automate attacks, analyze vulnerabilities, bypass security systems, and manipulate human behavior.

One of the major reasons AI has become attractive to hackers is automation. In the past, hackers needed to spend a significant amount of time manually scanning systems for weaknesses. Today, AI tools can automatically scan thousands of devices, websites, and servers within minutes. AI algorithms can quickly identify weak passwords, outdated software, open ports, or vulnerable applications. This allows hackers to launch attacks on a much larger scale than before.

For example, consider a hacker trying to attack a company website. Traditionally, the hacker would manually test different parts of the system to identify vulnerabilities. With AI, the hacker can use automated scanning tools that continuously analyze the target system and detect weak points far faster than manual testing. If the tool discovers an outdated plugin or software version, it can suggest an attack method or, in some cases, run the exploit with little or no human intervention. This significantly increases the speed and efficiency of cyber attacks.
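Defenders can run the same kind of version check on their own systems before an attacker does. The sketch below is a minimal illustration, assuming a hypothetical inventory of installed components and a hypothetical table of minimum patched versions (`MIN_PATCHED`); the component names and version numbers are made up for the example and do not come from any real advisory feed.

```python
# Hypothetical minimum patched versions; illustrative values only.
MIN_PATCHED = {
    "example-cms": (6, 4, 2),
    "gallery-plugin": (2, 1, 0),
}

def parse_version(text):
    """Turn a dotted version string such as '6.4.2' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))

def find_outdated(installed):
    """Return the names of components older than their minimum patched version."""
    outdated = []
    for name, version in installed.items():
        minimum = MIN_PATCHED.get(name)
        # Components without a known minimum are skipped, not flagged.
        if minimum is not None and parse_version(version) < minimum:
            outdated.append(name)
    return sorted(outdated)

# Hypothetical inventory of what is installed on a web server.
installed = {
    "example-cms": "6.4.2",
    "gallery-plugin": "2.0.9",
    "unknown-tool": "1.0",
}
print(find_outdated(installed))  # ['gallery-plugin']
```

Comparing versions as integer tuples avoids the string-comparison trap where "2.10" sorts before "2.9".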

Phishing attacks are another area where AI has changed hacking techniques. Phishing is a method where attackers trick people into revealing sensitive information such as passwords, bank details, or personal data by sending fake emails or messages. Earlier phishing emails were often poorly written and easy to identify because of spelling mistakes and suspicious formatting. However, AI-powered language models can now generate highly realistic emails that appear professional and convincing. These emails can imitate the writing style of banks, companies, teachers, or even friends and family members.

Imagine a student receiving an email that appears to come from the college administration asking them to verify their login credentials for examination registration. The message contains proper grammar, official logos, and personalized information such as the student’s name and course details. Since the email looks genuine, the student may unknowingly provide their credentials, giving attackers access to their account. AI makes these phishing attacks far more convincing and difficult to detect.
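Because well-written text is no longer a reliable warning sign, one check that still helps is comparing where an email's links actually point with the domain it claims to come from. The following is a rough sketch of that idea, not a complete phishing filter; the function names, the sender address, and the `.example` domains are all hypothetical.

```python
import re
from urllib.parse import urlparse

def link_domains(body):
    """Extract the host names of all http(s) links found in the message body."""
    urls = re.findall(r"https?://[^\s<>]+", body)
    return {urlparse(url).hostname for url in urls}

def suspicious_links(sender, body):
    """Return link hosts that do not belong to the sender's domain."""
    sender_domain = sender.rsplit("@", 1)[-1].lower()
    flagged = set()
    for host in link_domains(body):
        if host is None:
            continue
        # Accept the sender's own domain and its subdomains only.
        if host != sender_domain and not host.endswith("." + sender_domain):
            flagged.add(host)
    return sorted(flagged)

# Hypothetical messages claiming to come from a college administration.
genuine = "Register at https://portal.college.example/exams this week"
fake = "Verify your login at https://college-example.login-check.example/verify today"

print(suspicious_links("exams@college.example", genuine))  # []
print(suspicious_links("exams@college.example", fake))     # ['college-example.login-check.example']
```

A check like this misses attacks sent from compromised legitimate accounts, so it complements user awareness rather than replacing it.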

AI is also being used in social engineering attacks. Social engineering involves manipulating human psychology to gain unauthorized access to systems or confidential information. Hackers study human behavior and exploit emotions such as fear, urgency, trust, or curiosity. AI systems can analyze social media profiles, online activities, and communication patterns to create detailed profiles of individuals. Using this information, attackers can craft highly targeted attacks.

For instance, if a hacker notices through social media that an employee recently attended a technology conference, the attacker might send an AI-generated email pretending to share conference materials or presentation files. Since the email is related to the employee’s interests and recent activities, the victim is more likely to trust it and open malicious attachments or links.

Another dangerous development is the use of AI in password cracking. Passwords remain one of the most common methods of authentication, but weak passwords are easy targets for hackers. AI-powered tools can analyze patterns in password creation and predict likely combinations. Instead of trying random passwords one by one, AI systems learn from previously leaked password databases and identify common habits used by people when creating passwords.

For example, many users create passwords using their names, birthdays, favorite sports teams, or simple number patterns such as “123456” or “password123.” AI can quickly recognize these trends and generate probable password combinations, so an attacker can try the likeliest guesses first instead of exhaustively brute-forcing every combination. If a user creates a password like “Ravi2002,” such a system can rank close variations such as “Ravi@2002” or “Ravi123” among its earliest guesses.
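The same observation can be used defensively: a sign-up form can reject passwords that are just a personal detail with digits or symbols attached. The sketch below assumes the application already knows a few personal tokens for the user (name, team, and so on); `is_predictable` is a hypothetical helper, and the rule it applies is deliberately simple.

```python
import re

def is_predictable(password, personal_tokens):
    """Flag passwords whose letters contain one of the user's personal tokens."""
    # Keep only letters so "Ravi@2002" and "Ravi123" both reduce to "ravi".
    letters_only = re.sub(r"[^a-z]", "", password.lower())
    return any(
        len(token) >= 3 and token.lower() in letters_only
        for token in personal_tokens
    )

tokens = ["Ravi"]  # hypothetical personal details known for this user
print(is_predictable("Ravi2002", tokens))      # True
print(is_predictable("Ravi@2002", tokens))     # True
print(is_predictable("tG7#qLw9!zXp", tokens))  # False
```

This does not catch substitutions such as "R4vi", so it is best treated as one rule among several rather than a full strength estimate.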

Deepfake technology is another powerful example of how AI can support hacking and cybercrime. Deepfakes use AI to create realistic fake audio, video, or images that appear genuine. Cybercriminals can use deepfakes to impersonate business executives, politicians, or family members. In some cases, attackers have used AI-generated voice cloning to trick employees into transferring money or revealing confidential information.

Consider a situation where a company accountant receives a phone call from someone who sounds exactly like the company CEO. The caller urgently requests a financial transfer for a confidential project. Since the voice appears authentic, the employee may follow the instructions without suspicion. In reality, the voice was generated using AI-based voice cloning software. Such attacks demonstrate how AI can blur the line between reality and deception.

AI has also increased the effectiveness of malware. Malware refers to malicious software designed to damage systems, steal data, or disrupt operations. Traditional malware follows fixed instructions, making it possible for antivirus software to detect known patterns. Modern AI-powered malware, however, can adapt its behavior based on the environment it encounters. This type of malware can avoid detection by changing its code, hiding its activities, or delaying execution until security systems become inactive.

For example, an AI-powered malware program may remain inactive while it detects antivirus scanning activities. Once the scan is complete, the malware activates and begins stealing files or encrypting data. Some AI-based malware can even learn which security measures are being used and modify its attack strategy accordingly. This makes detection and prevention far more difficult for cybersecurity professionals.

Ransomware attacks have become one of the most profitable forms of cybercrime, and AI is making them even more dangerous. Ransomware encrypts a victim’s files and demands payment for decryption. AI can help attackers identify high-value targets such as hospitals, banks, universities, or government organizations. By analyzing network traffic and organizational data, AI systems can determine which systems contain critical information.

For example, if AI identifies that a hospital’s patient management system is essential for emergency operations, attackers may target that specific system knowing the organization is more likely to pay the ransom quickly. AI can also automate the spread of ransomware within networks, infecting multiple devices simultaneously.

Another important area where AI supports hacking is vulnerability discovery. Software applications often contain bugs or security flaws that can be exploited by attackers. Finding these vulnerabilities manually requires extensive testing and technical expertise. AI systems can analyze large amounts of source code, identify unusual patterns, and detect weaknesses much faster than humans.

Hackers can use AI tools to scan applications for vulnerabilities such as SQL injection, cross-site scripting, buffer overflow errors, or insecure authentication mechanisms. Once vulnerabilities are identified, AI can recommend exploitation techniques or automatically generate attack scripts. This accelerates the entire hacking process and increases the number of potential victims.
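Of the vulnerabilities listed above, SQL injection has a well-known fix: pass user input as a bound parameter instead of building the query string by hand. The sketch below uses Python's standard `sqlite3` module with an in-memory database; the `users` table and its contents are hypothetical and exist only for the demonstration.

```python
import sqlite3

# In-memory database with a hypothetical users table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (username TEXT, role TEXT)")
conn.execute("INSERT INTO users VALUES ('admin', 'administrator')")

def find_user(connection, username):
    """Look up a user with a bound parameter instead of string concatenation."""
    cursor = connection.execute(
        "SELECT username, role FROM users WHERE username = ?", (username,)
    )
    return cursor.fetchall()

print(find_user(conn, "admin"))             # [('admin', 'administrator')]
# The injection attempt is treated as a literal (non-matching) username.
print(find_user(conn, "admin' OR '1'='1"))  # []
```

Because the driver sends the value separately from the SQL text, the quote characters in the second call never become part of the query.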

AI is also playing a major role in botnet attacks. A botnet is a network of infected devices controlled remotely by attackers. These devices can be used to launch Distributed Denial of Service (DDoS) attacks, where massive amounts of traffic overwhelm a server or website, causing it to crash. AI allows botnets to coordinate attacks more intelligently by adapting traffic patterns and avoiding detection.

For example, during a DDoS attack on an online shopping website, AI can monitor server responses in real time and adjust attack intensity accordingly. If security systems begin blocking traffic from certain regions, the AI can redirect attacks through other infected devices around the world. This dynamic behavior makes AI-powered botnets extremely difficult to stop.
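On the defensive side, a common first layer against request floods is per-client rate limiting. The sketch below shows a sliding-window limiter; the class name, the limits, and the client address are assumptions chosen for illustration. As the paragraph above suggests, a botnet that rotates through many devices can stay under any per-client limit, so this would be only one layer among several.

```python
from collections import defaultdict, deque

class SlidingWindowLimiter:
    """Allow at most max_requests per client within any window_seconds span."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # client id -> recent timestamps

    def allow(self, client_id, now):
        """Return True if the request at time `now` (in seconds) is within the limit."""
        history = self._history[client_id]
        # Drop timestamps that have fallen out of the window.
        while history and now - history[0] >= self.window_seconds:
            history.popleft()
        if len(history) >= self.max_requests:
            return False
        history.append(now)
        return True

limiter = SlidingWindowLimiter(max_requests=3, window_seconds=10)
results = [limiter.allow("10.0.0.5", t) for t in (0, 1, 2, 3, 11)]
print(results)  # [True, True, True, False, True]
```

Passing the timestamp in explicitly keeps the example deterministic; a real service would typically read a monotonic clock instead.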

Despite the negative use of AI in hacking, it is important to understand that AI is also a powerful defensive tool in cybersecurity. Security organizations use AI to detect threats, monitor network activity, identify suspicious behavior, and respond to attacks quickly. AI systems can analyze billions of network events in real time and recognize unusual activities that may indicate cyber attacks.

For example, if an employee account suddenly attempts to download massive amounts of confidential data at midnight from an unusual location, AI-based security software can detect this abnormal behavior and alert administrators immediately. Some systems can automatically block suspicious activities before significant damage occurs.
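A very simple version of this kind of check compares a new observation with the account's own history. The sketch below flags a download volume that lies more than three standard deviations from the historical mean; the threshold, the function name, and the sample figures are assumptions, and production systems would typically combine many more signals such as time of day and location.

```python
from statistics import mean, stdev

def is_anomalous(history, value, threshold=3.0):
    """Return True if value is more than `threshold` standard deviations from the mean."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All past values identical: anything different is unusual.
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical daily download volumes for one account, in megabytes.
daily_downloads_mb = [120, 95, 130, 110, 105, 125, 115]

print(is_anomalous(daily_downloads_mb, 118))   # False
print(is_anomalous(daily_downloads_mb, 5000))  # True
```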

AI-powered antivirus solutions are also becoming more advanced. Instead of relying only on known virus signatures, modern AI security systems analyze behavior patterns. If a program suddenly starts encrypting files, accessing sensitive folders, or communicating with suspicious servers, the AI system can classify it as malicious even if it has never been seen before. This improves protection against new and unknown threats.
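One signal a behavior-based detector might use to notice that a program is encrypting files is byte entropy: encrypted output looks close to random, while ordinary documents do not. The sketch below is an assumption about how such a check could look, not a description of any particular antivirus product. It uses `os.urandom` as a stand-in for encrypted data.

```python
import math
import os
from collections import Counter

def shannon_entropy(data):
    """Return the Shannon entropy of a byte string in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    counts = Counter(data)
    total = len(data)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

# Plain text is repetitive; random bytes stand in for encrypted output.
text = b"Quarterly report: revenue grew in all regions. " * 50
random_like = os.urandom(4096)

print(round(shannon_entropy(text), 2))         # well below 8 for plain text
print(round(shannon_entropy(random_like), 2))  # close to 8 for random bytes
```

Compressed archives and media files also score high, so entropy alone would cause false alarms; it is more useful when combined with other behavior, such as many files being rewritten in a short time.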

Banks and financial institutions increasingly rely on AI to detect fraud and prevent cybercrime. AI systems monitor transaction patterns and identify unusual activities such as sudden large withdrawals, international transactions from unfamiliar locations, or repeated login failures. If suspicious behavior is detected, the system may temporarily freeze the account or request additional verification.

Educational institutions are also becoming targets of cyber attacks because they store large amounts of student data, research information, and financial records. Universities and colleges often face phishing attacks, ransomware incidents, and unauthorized access attempts. AI-based security systems help monitor campus networks and identify suspicious login activities or malware infections.

The rise of AI-driven hacking has also created ethical and legal concerns. Governments around the world are struggling to develop regulations for AI technologies. Since AI tools can generate realistic fake content, manipulate public opinion, and automate cyber attacks, there is growing concern about misuse. Some experts believe AI could eventually enable autonomous cyber weapons capable of launching attacks without direct human control.

For example, imagine an AI system programmed to attack critical infrastructure such as power grids or transportation systems during political conflicts. Such attacks could disrupt entire cities and endanger public safety. This possibility has increased discussions about international cyber laws and responsible AI development.

Cybersecurity experts emphasize that awareness and education are essential in protecting against AI-powered hacking. Individuals and organizations must adopt strong security practices such as using complex passwords, enabling multi-factor authentication, updating software regularly, and avoiding suspicious emails or links. Since AI-based phishing attacks are becoming more realistic, users must verify messages carefully before sharing sensitive information.
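Multi-factor authentication is often implemented with time-based one-time passwords (TOTP), the six-digit codes shown by authenticator apps. The sketch below follows the RFC 6238 algorithm with HMAC-SHA1 using only the standard library. The `totp` and `verify` function names are hypothetical, the secret is the RFC's published test value rather than a real key, and a production deployment would rely on a maintained library and also handle clock drift and rate limiting.

```python
import hashlib
import hmac
import struct
import time

def totp(secret, for_time=None, step=30, digits=6):
    """Compute an RFC 6238 time-based one-time password using HMAC-SHA1."""
    if for_time is None:
        for_time = time.time()
    counter = int(for_time) // step  # number of 30-second steps since the epoch
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation (RFC 4226): the low nibble of the last byte picks an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)

def verify(secret, submitted, for_time=None):
    """Compare the submitted code with the expected one in constant time."""
    return hmac.compare_digest(totp(secret, for_time), submitted)

secret = b"12345678901234567890"  # test secret from RFC 6238, not a real key
print(totp(secret, for_time=59, digits=8))  # 94287082 (RFC 6238 test vector)
print(verify(secret, "000000", for_time=59))  # False
```

Because the code depends on a shared secret and the current time, a stolen password alone is not enough to log in.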

Companies should also invest in employee training programs because human error remains one of the biggest cybersecurity weaknesses. Even the most advanced security systems can fail if employees unknowingly provide attackers with access credentials. Training helps users recognize phishing attempts, suspicious attachments, and social engineering tactics.

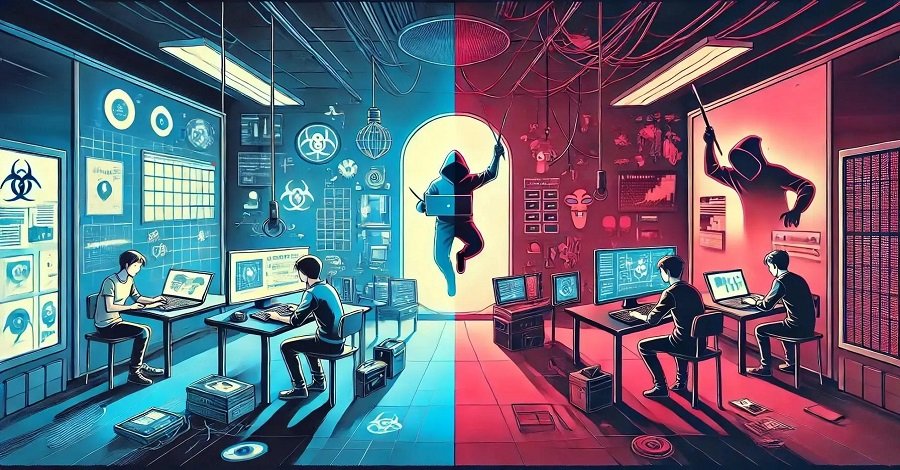

Another important strategy is ethical hacking. Ethical hackers, also known as white-hat hackers, use their skills to identify and fix security vulnerabilities before malicious hackers can exploit them. Many organizations hire ethical hackers to perform penetration testing and security assessments. AI tools are increasingly being used by ethical hackers to improve defensive capabilities and strengthen cybersecurity systems.

For example, an ethical hacker may use AI to simulate attacks against a company network and identify weak points. The organization can then fix these vulnerabilities before real attackers discover them. This proactive approach helps reduce the risk of cyber attacks.

Governments and international organizations are also working to strengthen cybersecurity frameworks. Countries are establishing cybercrime units, digital forensics laboratories, and national cybersecurity policies to combat hacking activities. Collaboration between governments, technology companies, and educational institutions is essential to address the growing threat of AI-powered cybercrime.

The future of hacking and AI remains uncertain. As AI technology continues to evolve, both attackers and defenders will gain more advanced capabilities. Cybersecurity experts predict that future attacks may become more autonomous, adaptive, and personalized. AI could potentially create malware capable of learning from failed attacks and modifying itself automatically to improve success rates.

At the same time, defensive AI systems will also become smarter. Future cybersecurity solutions may use predictive analysis to identify threats before attacks even occur. AI could help create self-healing networks capable of automatically isolating infected devices and repairing vulnerabilities without human intervention.

The relationship between hacking and AI represents a continuous technological battle. Every improvement in cybersecurity leads attackers to develop new methods, while every new attack encourages the creation of stronger defenses. This cycle is likely to continue as technology advances further.

In conclusion, hacking has evolved significantly from its early origins, and Artificial Intelligence has become a major factor shaping modern cyber threats. AI has enabled hackers to automate attacks, improve phishing techniques, crack passwords faster, create adaptive malware, and manipulate human behavior using deepfakes and social engineering. At the same time, AI has also strengthened cybersecurity by improving threat detection, fraud prevention, and automated defense systems. The increasing use of AI in hacking highlights the importance of cybersecurity awareness, ethical technology development, and international cooperation. As digital systems continue to expand across every aspect of society, understanding the relationship between AI and hacking will become essential for protecting individuals, organizations, and nations from future cyber threats.

The following code examples illustrate cybersecurity and ethical hacking concepts and show how AI can be involved in security analysis. They are intended for learning, awareness, and defensive understanding only.

1. Simple Password Strength Checker Using Python

This example checks whether a password is weak or strong.

    import re

    def check_password_strength(password):
        """Score a password from 0 to 5, one point per rule it satisfies."""
        strength = 0
        if len(password) >= 8:                     # at least 8 characters
            strength += 1
        if re.search(r"[A-Z]", password):          # an uppercase letter
            strength += 1
        if re.search(r"[a-z]", password):          # a lowercase letter
            strength += 1
        if re.search(r"[0-9]", password):          # a digit
            strength += 1
        if re.search(r"[!@#$%^&*()]", password):   # a special character
            strength += 1

        if strength == 5:
            return "Very Strong Password"
        elif strength >= 3:
            return "Moderate Password"
        else:
            return "Weak Password"

    password = input("Enter Password: ")
    print(check_password_strength(password))

2. Basic AI-Based Phishing Email Detection

This example uses Machine Learning to classify emails as phishing or safe.

    from sklearn.feature_extraction.text import CountVectorizer
    from sklearn.naive_bayes import MultinomialNB

    # Tiny toy training set; a real classifier needs far more labeled examples
    emails = [
        "Your bank account is suspended click here",
        "Win a free iPhone now",
        "Meeting scheduled for tomorrow",
        "Project submission deadline extended"
    ]
    labels = ["phishing", "phishing", "safe", "safe"]

    # Convert each email into word counts
    vectorizer = CountVectorizer()
    X = vectorizer.fit_transform(emails)

    # Train a Naive Bayes classifier on the word counts
    model = MultinomialNB()
    model.fit(X, labels)

    # Classify a new email using the same vocabulary
    test_email = ["Click here to verify your bank password"]
    test_data = vectorizer.transform(test_email)
    prediction = model.predict(test_data)

    print("Prediction:", prediction[0])

3. Login Attempt Monitoring System

This example detects multiple failed login attempts.

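    failed_attempts = {}
    MAX_ATTEMPTS = 3

    def login(username, password):
        # Demo only: a real system compares against a salted password hash
        correct_password = "admin123"

        # Refuse further attempts once the account is locked
        if failed_attempts.get(username, 0) >= MAX_ATTEMPTS:
            print("Account Temporarily Locked")
            return False

        if password == correct_password:
            print("Login Successful")
            failed_attempts[username] = 0
            return True

        failed_attempts[username] = failed_attempts.get(username, 0) + 1
        print("Wrong Password")
        if failed_attempts[username] >= MAX_ATTEMPTS:
            print("Account Temporarily Locked")
        return False

    username = input("Username: ")
    # Keep prompting so repeated failures can actually reach the lockout limit
    while not login(username, input("Password: ")):
        if failed_attempts[username] >= MAX_ATTEMPTS:
            break

This type of monitoring helps prevent brute-force attacks.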

4. AI-Based Suspicious Activity Detection

This example shows how AI can identify unusual behavior.

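    from sklearn.ensemble import IsolationForest
    import numpy as np

    # Normal login hours (9 AM to 3 PM)
    data = np.array([[9], [10], [11], [12], [13], [14], [15]])

    # contamination is the assumed share of outliers in the training data
    model = IsolationForest(contamination=0.1)
    model.fit(data)

    test = np.array([[3]])  # Login at 3 AM
    prediction = model.predict(test)  # -1 = outlier, 1 = inlier

    if prediction[0] == -1:
        print("Suspicious Login Detected")
    else:
        print("Normal Activity")

Output when the model flags the 3 AM login (the forest is randomized and this training set is tiny, so the result can vary between runs):

    Suspicious Login Detected

Banks and companies use similar techniques to detect unusual activities.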

5. Simple Keylogger Awareness Example (Educational Only)

This example demonstrates how keyboard input can be monitored. This is shown strictly for awareness and defensive education.

    from pynput.keyboard import Listener

    def key_pressed(key):
        print(f"Key Pressed: {key}")

    with Listener(on_press=key_pressed) as listener:
        listener.join()

This demonstrates why users should avoid installing unknown software.

6. Simple Port Scanner (Ethical Learning)

A port scanner checks which ports are open on a system.

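    import socket

    target = "127.0.0.1"  # scan only hosts you own or are authorized to test

    for port in range(20, 100):
        # The with block closes the socket after each attempt
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
            s.settimeout(0.5)  # avoid hanging on ports that do not respond
            result = s.connect_ex((target, port))
            if result == 0:  # 0 means the connection succeeded
                print(f"Port {port} is OPEN")

Example Output

    Port 22 is OPEN
    Port 80 is OPEN

Ethical hackers use such tools to identify exposed services.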

7. AI Chatbot Used in Social Engineering Simulation

This demonstrates how AI-generated conversations may manipulate users.

    responses = {
        "bank": "Your bank account needs verification.",
        "password": "Please reset your password immediately.",
        "otp": "Do not share OTP with anyone."
    }

    user_input = input("Enter Message Topic: ")

    if user_input in responses:
        print(responses[user_input])
    else:
        print("No Response Available")

Modern AI systems can generate much more realistic conversations automatically.

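8. Face Detection Security Example

AI is widely used in biometric authentication. The example below performs face detection, the step that locates faces in an image before a recognition system tries to identify them.

    import cv2

    # Load OpenCV's bundled Haar cascade for frontal faces
    face_cascade = cv2.CascadeClassifier(
        cv2.data.haarcascades + 'haarcascade_frontalface_default.xml'
    )

    img = cv2.imread('person.jpg')
    if img is None:  # imread returns None when the file cannot be read
        raise FileNotFoundError('person.jpg could not be loaded')

    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    # Arguments: scaleFactor=1.1, minNeighbors=4
    faces = face_cascade.detectMultiScale(gray, 1.1, 4)

    # Draw a blue rectangle (colors are in BGR order) around each face
    for (x, y, w, h) in faces:
        cv2.rectangle(img, (x, y), (x+w, y+h), (255, 0, 0), 2)

    cv2.imshow('Face Detection', img)
    cv2.waitKey(0)  # wait for a key press before closing
    cv2.destroyAllWindows()

This type of AI technology is used in surveillance and authentication systems.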

9. Detecting SQL Injection Attempts

This code checks suspicious input patterns.

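    user_input = input("Enter Username: ")
    sql_keywords = ["SELECT", "DROP", "DELETE", "--"]

    # Keyword matching is only a rough heuristic; parameterized queries
    # are the actual defense against SQL injection.
    for keyword in sql_keywords:
        if keyword.lower() in user_input.lower():
            print("Possible SQL Injection Detected")
            break
    else:
        # for/else: runs only when the loop finished without hitting break
        print("Input Accepted")

Example

Input:

    admin' DROP TABLE users;

Output:

    Possible SQL Injection Detected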

10. AI-Based Spam Message Classification

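This example uses a linear Support Vector Machine on TF-IDF features to classify messages as spam or normal.

    from sklearn.feature_extraction.text import TfidfVectorizer
    from sklearn.svm import LinearSVC

    # Tiny toy training set; a real classifier needs far more labeled examples
    messages = [
        "Congratulations you won lottery",
        "Free recharge available",
        "Class starts at 10 AM",
        "Project meeting tomorrow"
    ]
    labels = ["spam", "spam", "normal", "normal"]

    # Convert each message into TF-IDF weighted word features
    vectorizer = TfidfVectorizer()
    X = vectorizer.fit_transform(messages)

    model = LinearSVC()
    model.fit(X, labels)

    # Classify a new message using the same vocabulary
    test = ["Claim your free reward now"]
    test_vector = vectorizer.transform(test)
    prediction = model.predict(test_vector)

    print(prediction[0])

Output

    spam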